The Bubble and the Long Game

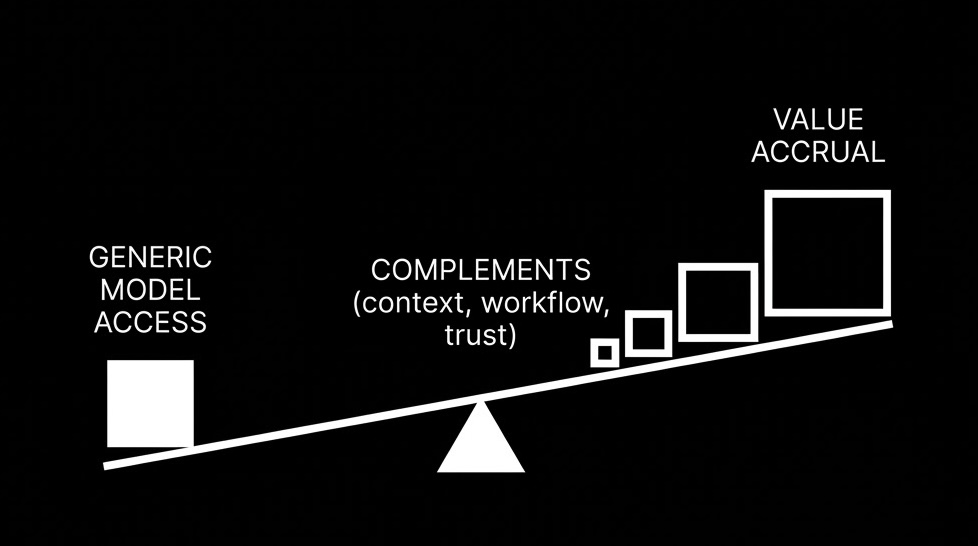

TL;DR: The printing press took roughly 60 years to become economically sustainable. LLMs are on a similar diffusion path: broad adoption, shallow integration. The winners won't be defined by model access. They'll be the ones who own the complements: context, workflow, trust.

Spending February and March 2025 in San Francisco, going to AI Engineer events in New York, being terminally online on X: all of it creates a special kind of anxiety.

Every week, a new model. Every month, a new capability. The scaling laws march on. The bitter lesson teaches us that compute wins. You watch the benchmarks, you watch the demos, you watch your timeline fill with announcements. And you think: everyone else is ahead. Everyone else gets it. I’m falling behind.

But here’s the thing: more than three years after ChatGPT launched, where are the transformational real-world examples?

Don’t get me wrong—I use AI daily. It’s changed how I code, how I write, how I think. But when I look for the economic revolution, the productivity explosion, the fundamental reshaping of industries… I mostly see experimentation. I see broad adoption but shallow integration. I see pilots and prototypes and proofs of concept.

I see the bubble. I’m not sure I see the transformation.

# What Gutenberg Actually Went Through

I was listening to Ada Palmer on the Dwarkesh Podcast about her book Inventing the Renaissance, and she told a story that stopped me in my tracks.

Gutenberg went bankrupt in the 1450s after inventing the printing press. So did the bank that foreclosed on him. So did his apprentices.

The problem wasn’t the technology, which worked. The problem was that paper was still expensive, so printing 300 copies of a book meant a massive upfront investment. But Gutenberg was in Mainz, a small landlocked German town where only priests were legally allowed to read the Bible. He’d print 300 copies and sell maybe seven.

The economics only worked when the technology reached Venice, because Venice was the airport hub of the Mediterranean: a print run could leave on dozens of ships and reach dozens of cities. As Ada Palmer puts it: “It’s only when this technology ends up in Venice, where you can hand 10 copies to each of 30 ship captains going to 30 different cities, that it starts taking off.”
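The arithmetic behind that story can be sketched in a few lines. This is a toy model under stated assumptions: the 300-copy print run, the seven local sales, and the 30 captains carrying 10 copies each come from the story, while `cost_per_copy`, `price_per_copy`, and the premise that every shipped copy sells are hypothetical values chosen only for illustration.

```python
def print_run_profit(print_run, copies_sold, cost_per_copy=1.0, price_per_copy=3.0):
    """Profit on one print run, in arbitrary currency units.

    The whole run is paid for upfront; revenue depends on how many
    copies actually reach a buyer. Cost and price are hypothetical.
    """
    upfront_cost = print_run * cost_per_copy
    revenue = min(copies_sold, print_run) * price_per_copy
    return revenue - upfront_cost


# Mainz: print 300, sell about seven locally.
mainz = print_run_profit(print_run=300, copies_sold=7)

# Venice: 30 ship captains each carry 10 copies to a different city.
# Assumes (optimistically) that every shipped copy sells.
venice = print_run_profit(print_run=300, copies_sold=30 * 10)

print(f"Mainz:  {mainz:+.0f}")
print(f"Venice: {venice:+.0f}")
```

Same press, same print run, same price. Only the number of reachable buyers changes the sign of the result.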

The printing press was invented around 1440. By 1500—sixty years later—printing presses across Europe had produced more than 20 million volumes. But the transformation? That took centuries. Literacy had to spread. Education systems had to be built. Distribution networks had to develop. Trust in printed text had to be established.

# The Diffusion vs. Invention Gap

Elizabeth Eisenstein, in her seminal work The Printing Press as an Agent of Change, makes a crucial distinction: inventing a technology and diffusing it through society are fundamentally different processes.

The printing press sharply reduced the cost of reproducing text. But its deepest effects emerged through a slower diffusion process: falling costs, organizational redesign, worker adaptation, trust systems, and infrastructure build-out gradually converting a technical breakthrough into a general-purpose social and economic technology.

Sound familiar?

LLMs are to cognition what the printing press was to copying. They lower the cost of drafting, summarizing, translating, coding, classifying, and recombining language. But the transformation won’t happen at the moment of invention. It happens through the long, slow work of institutional adaptation.

# What the Data Actually Shows

The OECD’s 2025 analysis argues that generative AI has the characteristics of a general-purpose technology, but also warns that, like earlier general-purpose technologies, it may show a productivity paradox: large gains do not appear immediately because they depend on complementary investments in skills, organizational change, and other innovations.

The current evidence mostly fits that interpretation.

The Stanford HAI AI Index 2025 reports that 78% of organizations used AI in 2024, up from 55% the year before. Generative AI attracted $33.9 billion in global private investment. Yet McKinsey finds that only 1% of executives describe their generative-AI rollouts as “mature,” and less than one-third of organizations follow most of the adoption and scaling practices associated with value capture.

In other words: broad adoption, shallow integration. Diffusion is happening. Transformation is not.

At the task level, LLMs already create measurable gains. A large NBER field study of 5,179 customer-support agents found that access to a generative-AI assistant raised productivity by 14% on average, with a 34% improvement for novice and low-skilled workers. The Federal Reserve notes a gap between worker-reported AI use (20-40%) and firm-reported use (5-40%), suggesting much early adoption is informal, bottom-up, and partly invisible to management.

This is what an early diffusion phase looks like: people use the technology before institutions fully redesign around it.

# Why the Bubble Feels So Real

The economics of use are improving at a pace that’s genuinely hard to process. Stanford reports that the inference cost of GPT-3.5-level performance fell more than 280-fold between November 2022 and October 2024. METR finds that the length of tasks frontier AI agents can complete with 50% reliability has been doubling roughly every seven months.
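To put those two figures on a common footing, here is a back-of-the-envelope conversion into halving and doubling rates. It assumes smooth exponential change and counts November 2022 to October 2024 as 23 months. Both are my simplifications, not claims from the Stanford or METR reports.

```python
import math


def halving_time_months(fold_decrease, months):
    """Months for cost to halve, assuming a constant exponential decline."""
    return months / math.log2(fold_decrease)


def annual_factor(doubling_time_months):
    """Growth multiple per year implied by a fixed doubling time."""
    return 2 ** (12 / doubling_time_months)


# Assumption: November 2022 to October 2024 counted as 23 months.
cost_halving = halving_time_months(fold_decrease=280, months=23)
task_growth = annual_factor(doubling_time_months=7)

print(f"Inference cost halves roughly every {cost_halving:.1f} months")
print(f"Agent task length grows roughly {task_growth:.1f}x per year")
```

Under these assumptions, cost halves in a little under three months and the task horizon grows a bit more than threefold per year.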

If you’re in the bubble—if you’re watching the benchmarks, reading the papers, using the new models the day they drop—it feels like everything is accelerating exponentially. Because it is.

But capability and affordability are improving faster than institutions can adapt. The pressure to adopt keeps rising even as today’s workflows remain clumsy and unreliable. We’re living through what the productivity J-curve predicts: task-level gains appear early, but economy-wide gains arrive later because firms need complementary investments in skills, organizational redesign, and new processes.
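A toy simulation can make the J-curve mechanism concrete. It is a sketch under my own assumptions, not the model from the productivity literature: a firm with one unit of labor diverts a hypothetical `invest_share` of it to building complements for `invest_years`, that effort produces no measured output at first, and each unit of accumulated complements later lifts output by a hypothetical `payoff`.

```python
def measured_productivity(years, invest_years, invest_share, payoff):
    """Measured output per unit of labor, year by year (baseline = 1.0)."""
    complements = 0.0  # accumulated skills, redesigned processes, tooling
    series = []
    for year in range(years):
        share = invest_share if year < invest_years else 0.0
        # Labor spent on complements yields nothing measurable this year,
        # but complements built in earlier years raise output.
        output = (1.0 - share) * (1.0 + payoff * complements)
        series.append(output)
        complements += share
    return series


# Hypothetical parameters chosen only to show the shape.
curve = measured_productivity(years=6, invest_years=3, invest_share=0.2, payoff=0.5)
for year, value in enumerate(curve):
    print(f"year {year}: {value:.2f}")
```

Measured productivity starts below the baseline while the firm is investing and rises above it only once the complements are in place, which is the dip-then-rise shape the J-curve describes.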

# The Complements Are Where Value Accrues

Here’s the insight that changed how I think about my own FOMO: the durable winners in the printing press era weren’t just the people who owned presses. They were the publishers, the distributors, the educators, the institutions that organized the flood of text.

With LLMs, the strongest opportunities for early adopters are likely to be in the complements around the models, not the models themselves.

The context layer. Firms that organize internal knowledge, permissions, metadata, and retrieval well will get much more reliable AI than firms with messy data. The moat is not the model—it’s the context layer around the model. Companies like Glean and Pinecone are building this infrastructure.

Vertical workflow software. The best businesses will be domain-specific: legal review, tax preparation, underwriting, clinical documentation, procurement. Generic chat is easy to copy; domain workflow is harder. Look at Harvey in legal, or how Tempus is transforming clinical workflows.

The trust layer. Audit trails, evaluation, red-teaming, policy enforcement, provenance, compliance tooling. This layer becomes more valuable precisely when regulation tightens and incident risks rise. Companies like Arthur AI and Fiddler AI are building the governance infrastructure.

AI-native services. Because LLMs help novice workers disproportionately, they can compress apprenticeship and allow firms to redesign service delivery in consulting, support, operations, and research. Many service firms will quietly become “software-plus-judgment” businesses.

Private and efficient deployment. As model costs fall and open-weight systems improve, firms can justify secure, local, or sector-specific deployments. Part of the long-term opportunity isn’t just using AI—it’s making AI economically and operationally sustainable at scale.

# The Hard Truth About Counter-Arguments

Yes, LLMs have characteristics that could accelerate diffusion compared to historical technologies. They leverage existing digital infrastructure rather than requiring new physical systems. Natural language interfaces lower skill barriers. APIs enable immediate integration. ChatGPT reached 100 million users in two months, a pace that makes telephone adoption (75 years to 50 million users) look glacial.

And to be fair, there are real transformations already. Coding workflows have changed. Customer support has changed. Marketing and content operations have changed. A lot of knowledge work now has an AI-shaped step in the loop by default.

But that still feels different from an economy-wide transformation. I’m not looking for cool demos or teams that work faster with copilots. I’m looking for industry structure changing, for organizational charts changing, for productivity showing up outside case studies and conference talks.

Trust is lagging too. The Stanford HAI AI Index reports that AI-related incident reports rose to 233 in 2024, a 56.4% increase over 2023. Standardized responsible-AI evaluations remain uncommon among major developers. Regulation is only now arriving: the European AI Act began applying obligations for general-purpose AI models in August 2025, with enforcement powers starting in August 2026.

The constraint on diffusion is increasingly shifting away from raw capability and toward workflow design, trust, and coordination.

# What Petrarch Teaches Us About Playing the Long Game

Ada Palmer tells another story that I can’t stop thinking about.

Petrarch survived the Black Death in the 1340s, watched his friends die of plague and at the hands of bandits, and said: our leaders are selfish and terrible. We need to raise them on the Roman classics so they’ll act like Cicero. So Europe poured money into finding ancient manuscripts, building libraries, and educating princes on classical virtues.

And those princes grew up and fought bigger, nastier wars than ever before.

But the libraries stuck around. The printing press made them accessible to everyone. And centuries later, some of the infrastructure built for one purpose ended up enabling completely different breakthroughs.

That’s the part that matters to me. Petrarch did not get the outcome he wanted on the timeline he wanted. But he helped create the conditions for outcomes he could not foresee.

# The Antidote to FOMO

The printing-press analogy suggests that LLMs will matter most not as standalone inventions, but as the foundation for a long reorganization of firms, professions, and institutions around cheap machine-generated cognition.

Their diffusion is likely to be uneven, delayed, and shaped by complements: skills, workflows, governance, infrastructure, and trust.

So here’s what I tell myself when the FOMO hits:

Stop panicking about model releases. The model is the printing press—you don’t need to own it. You need to own the distribution network, the trust infrastructure, the context layer, the workflow integration.

The early adopters with the greatest long-term advantage won’t be the first to use LLMs. They’ll be the first to turn them into dependable systems of production.

For me, that means spending less time doomscrolling model launches and more time learning how to build reliable systems around them. Better evals. Better context. Better human handoffs. Better workflow fit.

Play the long game. The bubble is real, but the transformation is a decades-long project. That’s where the real value accrues: not in the hype, but in the slow, patient work of building the complements that make the technology actually work in the real world.

Inspired by Ada Palmer’s appearance on the Dwarkesh Podcast discussing her book “Inventing the Renaissance.”